Neural machine translation, or NMT, is a fairly new paradigm. Before NMT systems came into use, machine translation had already gone through several other types of systems. But as research in artificial intelligence advances, it is only natural that we try to apply it to translation.

History of Neural Machine Translation

Deep learning applications first appeared in the 1990s. They were used at first not in translation, but in speech recognition. At that time, automatic translation was starting to regain momentum, after almost all research on the subject had been dropped in the 1960s because machine translation was believed to cost too much for very mediocre results.

Rule-based machine translation was then the most widely used approach, and statistical machine translation was starting to gain importance.

The first scientific papers on end-to-end neural machine translation appeared in 2014. After that, the field advanced rapidly.

In 2015, OpenMT, a machine translation competition, counted a neural machine translation system among its contenders for the first time. WMT, another competition, also had its first NMT contender that year, and the following year NMT systems already made up 90% of its winners.

In 2016, Google launched the Google Neural Machine Translation system (GNMT) for Google Translate. DeepL Translator, another well-known free neural MT system, followed in 2017.

How Neural Machine Translation Works

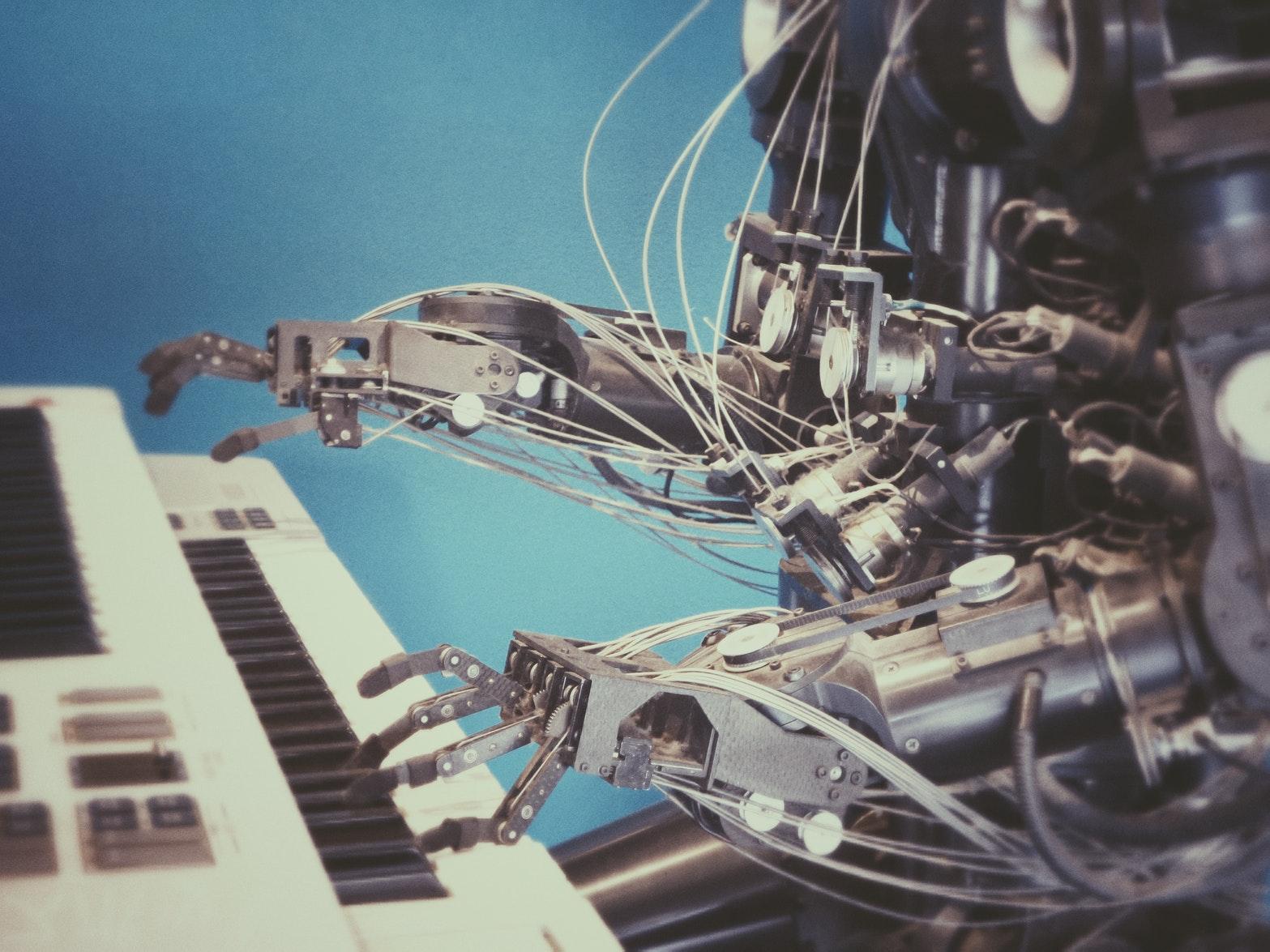

These NMT systems are made up of artificial neurons, connected to one another and organized in layers. They are inspired by biological neural networks and learn on their own from the data they receive, which can be extended as new documents are translated. The “learning” process consists of adjusting the weights of the connections between artificial neurons. It is repeated over many examples, so that the weights, and thus the quality of subsequent translations, are progressively optimized. NMT systems are trained on bilingual corpora of source and target documents that have been translated in the past.
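To make the idea of "modifying the weights" more concrete, here is a minimal sketch in Python. It is not how a real NMT system is built: it assumes a single artificial neuron with one weight and one bias, toy numeric data, and plain gradient descent. The function name `train_neuron` and its parameters are hypothetical and chosen only for illustration.

```python
def train_neuron(examples, learning_rate=0.1, epochs=200):
    """Fit a single linear neuron (prediction = w * x + b) by gradient descent.

    Assumption: a toy stand-in for the weight updates of a full network.
    """
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, target in examples:
            prediction = w * x + b
            error = prediction - target
            # Gradient of 0.5 * error**2 with respect to w and b.
            w -= learning_rate * error * x
            b -= learning_rate * error
    return w, b


# Toy data following target = 2 * x + 1 (invented for this example).
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
w, b = train_neuron(examples)
print(round(w, 3), round(b, 3))
```

Each pass nudges the weight and bias so that the prediction error shrinks. A real NMT system applies the same principle to millions of weights at once, with sentence pairs instead of numbers.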

The translation itself works in two phases.

First, there is an analysis phase. The words of the source document are encoded as a sequence of vectors that represent their meaning. A context is generated for each word, based on the relation between the word and the context of the previous word. Then, using this new context, the correct translation for the word is selected from all the possible translations the word could have.
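The analysis phase can be sketched roughly as follows. This is a simplified illustration, assuming tiny hand-written word vectors and two fixed scalar weights. In a real system the vectors and weights are learned and much larger. The names `EMBEDDINGS`, `encode`, `input_weight` and `context_weight` are hypothetical.

```python
import math

# Hypothetical toy embeddings: each source word maps to a 2-dimensional vector.
EMBEDDINGS = {
    "the": [0.1, 0.3],
    "cat": [0.7, -0.2],
    "sleeps": [-0.4, 0.5],
}


def encode(words, embeddings=EMBEDDINGS, input_weight=0.8, context_weight=0.5):
    """Return one context vector per word; each depends on the previous context."""
    size = len(next(iter(embeddings.values())))
    context = [0.0] * size  # empty context before the first word
    contexts = []
    for word in words:
        vector = embeddings[word]
        # Combine the current word vector with the previous context.
        context = [
            math.tanh(input_weight * v + context_weight * c)
            for v, c in zip(vector, context)
        ]
        contexts.append(context)
    return contexts


contexts = encode(["the", "cat", "sleeps"])
```

Because every context is computed from the previous one, the vector produced for "cat" after "the" differs from the vector produced for "cat" alone. That difference is what lets the system choose between several possible translations of the same word.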

After that comes the transfer phase. This is a decoding phase, in which the sentence in the target language is generated.
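As a rough illustration of decoding, the sketch below generates a target sentence one word at a time, always keeping the best-scoring next word (a strategy usually called greedy decoding). It assumes a small hand-written score table. In a real NMT system these scores would be computed by the network from the encoded source sentence. The names `NEXT_WORD_SCORES` and `greedy_decode` are hypothetical.

```python
# Hypothetical scores: for each previous target word, how likely each next word is.
NEXT_WORD_SCORES = {
    "<start>": {"le": 0.9, "chat": 0.1},
    "le": {"chat": 0.8, "dort": 0.2},
    "chat": {"dort": 0.7, "<end>": 0.3},
    "dort": {"<end>": 1.0},
}


def greedy_decode(scores=NEXT_WORD_SCORES, max_length=10):
    """Build the target sentence by picking the highest-scoring word at each step."""
    previous = "<start>"
    output = []
    for _ in range(max_length):
        candidates = scores[previous]
        best = max(candidates, key=candidates.get)
        if best == "<end>":
            break  # the sentence is complete
        output.append(best)
        previous = best
    return output


translation = greedy_decode()
print(" ".join(translation))  # prints "le chat dort"
```

Production systems often keep several candidate sentences in parallel instead of a single one, but the word-by-word principle is the same.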

Deep Learning: Better, but Still Not Perfect

Even though deep learning systems are the best machine translation systems to date, they are not perfect and cannot work completely on their own. Because languages are used every day, they are constantly evolving. Deep learning systems therefore always need to keep learning, especially neologisms and new expressions. And to learn about these new elements, they will always need the help of humans, whether to work on the systems directly or to post-edit translated documents.

Nonetheless, systems that can “learn” on their own represent a massive improvement, not only for machine translation, but also for any natural language processing task, as well as for artificial intelligence in general.

Neural machine translation still needs research and improvement. But it does represent a bright future for machine translation. Of all the people reading this article, most will have used a neural machine translation system before, whether knowingly or not. And if you haven’t, there is a good chance that you will at least try one now, such as DeepL Translator.

Thank you for reading. We hope you found this article insightful.


Thanks from the TCloc web team
